Executive Summary

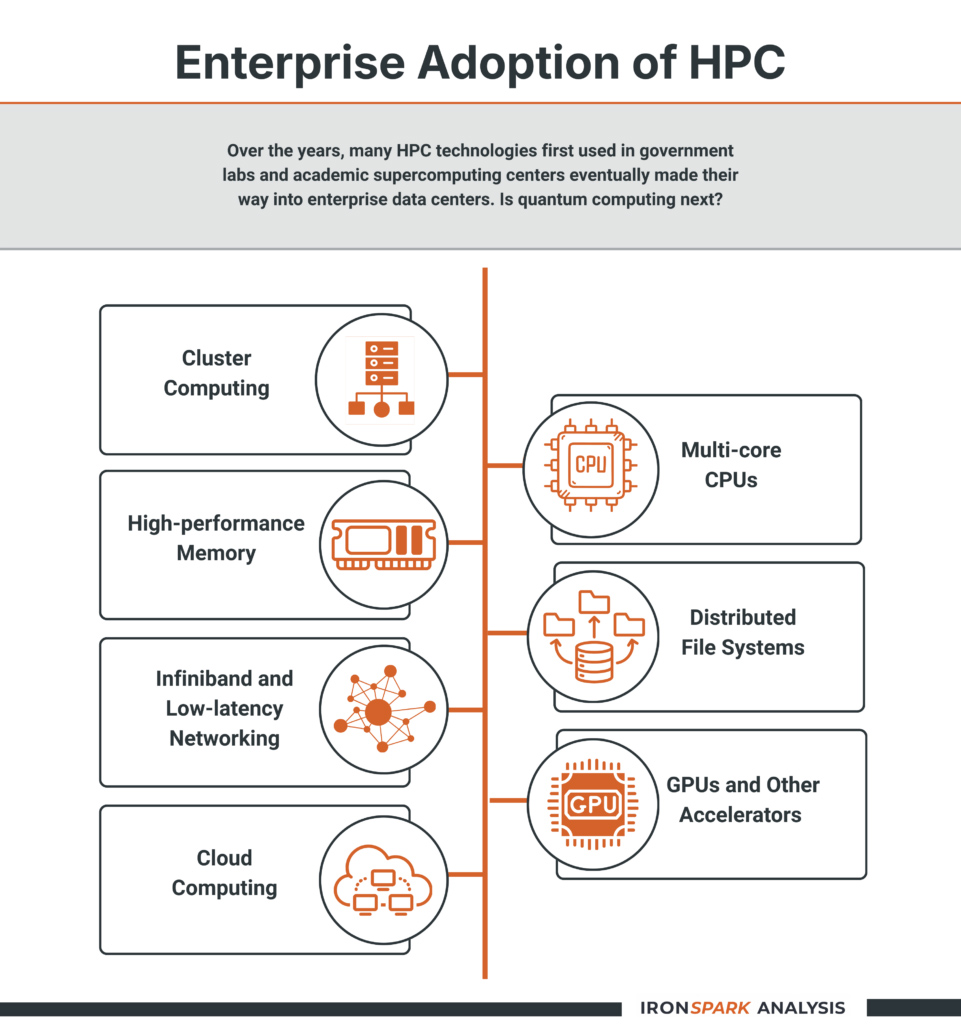

Interest in quantum computing is rapidly shifting from its traditional application areas of cryptography and ultra-secure communications to high-performance computing (HPC). Recently, conventional HPC infrastructure providers, including NVIDIA, IBM, and Cisco, have announced technologies to bring quantum computing to the HPC market.

The announcements all point to one conclusion: academic and national laboratory supercomputing centers will soon adopt quantum computing, the latest HPC technological advancement, to accelerate their workloads and speed computational results.

If the past is any indication, successful adoption of the technology in these environments will be the first step toward making quantum computing part of future enterprise compute environments.

State of the Market

Key Insight

Tech vendors’ quantum announcements signal long-term commitment, not near-term enterprise disruption.

Tech Vendors Embrace Quantum

Several recent announcements show the industry’s interest in bringing quantum computing capabilities to the HPC marketplace. They include:

- NVIDIA announced NVIDIA NVQLink, an open system architecture for tightly coupling the extreme performance of GPU computing with quantum processors to build accelerated quantum supercomputers.

- IBM unveiled IBM Quantum Nighthawk, its most advanced quantum processor yet, with an architecture designed to complement high-performing quantum software and help deliver quantum advantage by the end of 2026.

- Cisco, noting the need to scale quantum computing systems, announced software that enables distributed quantum computing. The company said it is taking the same systems-level approach in this area that it took with networking for traditional compute systems.

Another intriguing announcement came from Horizon Quantum Computing. The company announced Beryllium, a hardware-agnostic, high-level, object-oriented language for programming quantum computers.

Reality Checks

Despite the new attention on quantum computing from these stalwart HPC companies, the industry will have to address many issues if quantum computing is to become a factor in the marketplace.

Scalability

Current systems lack the logical qubits required for production workloads.

Scaling quantum computing systems is one of the field’s most fundamental challenges. While prototype systems with tens or hundreds of physical qubits exist, achieving the thousands of logical qubits (backed by millions of physical qubits) required for fault-tolerant, commercially useful computation is extraordinarily difficult.

Physical qubits are highly susceptible to noise, decoherence, and operational errors, which makes extensive error correction necessary. Error correction, in turn, often requires hundreds or thousands of physical qubits to create a single reliable logical qubit.
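To make that overhead concrete, the back-of-the-envelope sketch below assumes a rotated surface code, a common error-correction scheme that this report does not itself name. In that scheme, a logical qubit of code distance d uses d² data qubits plus d² - 1 measurement qubits, or 2d² - 1 physical qubits in total. The code distances and the 1,000-logical-qubit target are illustrative assumptions, not vendor figures, and real designs add further overhead for routing and other functions.

```python
# Back-of-the-envelope estimate of error-correction overhead.
# Assumption: a rotated surface code, where one logical qubit of
# code distance d uses d*d data qubits plus d*d - 1 measurement qubits.
# Real architectures add routing, magic-state factories, and more.

def physical_per_logical(distance: int) -> int:
    """Physical qubits for one rotated-surface-code logical qubit."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("code distance is usually an odd integer >= 3")
    return 2 * distance * distance - 1


def total_physical(logical_qubits: int, distance: int) -> int:
    """Physical qubits for a block of logical qubits (no extra overhead)."""
    return logical_qubits * physical_per_logical(distance)


if __name__ == "__main__":
    # Illustrative distances only; the distance actually needed depends on
    # the hardware's physical error rate and the target logical error rate.
    for d in (11, 17, 25):
        print(f"d={d}: {physical_per_logical(d):>5} physical per logical, "
              f"{total_physical(1_000, d):>9,} for 1,000 logical qubits")
```

At distance 25, for example, the sketch gives 1,249 physical qubits per logical qubit, so 1,000 logical qubits would already need more than a million physical qubits before any other overhead is counted.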

As systems scale, the complexity of qubit control, cryogenic infrastructure, calibration, and interconnects grows nonlinearly. These engineering constraints make it unclear whether quantum hardware can scale economically or operationally in the same way classical HPC systems have, raising questions about timelines for large-scale, general-purpose quantum advantage.

Commercial Viability

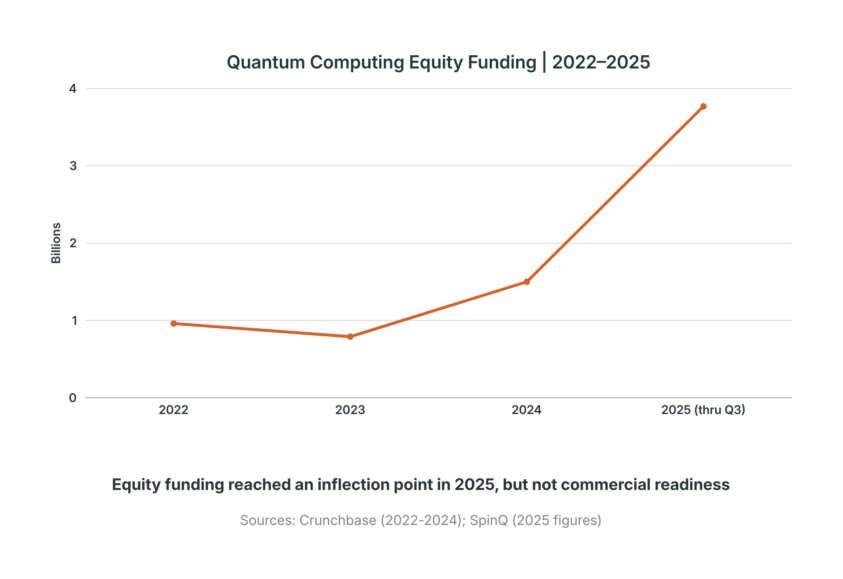

Long timelines and funding risk remain significant.

From a market perspective, quantum computing faces a precarious funding and commercialization challenge. Most quantum vendors remain heavily dependent on venture capital, government grants, and strategic partnerships, with limited near-term revenue opportunities.

Seed and early-stage funding have been sufficient to demonstrate scientific progress. It is far less certain that such funding can sustain the long, capital-intensive journey to commercially viable products. That creates a real risk that companies with technically promising approaches may fail financially before the technology matures.

Quantifiable Benefits

Classical HPC and AI continue to advance faster and at lower risk.

Perhaps the most important open question is proof of value. Will quantum computing deliver performance improvements that are both meaningful and cost-justified compared to conventional HPC?

Many of the problem areas targeted by quantum computing, including optimization, simulation, and machine learning, are also seeing rapid advances in classical architectures, including GPUs, specialized accelerators, improved algorithms, and hybrid HPC–AI systems. In some cases, classical methods may close much of the performance gap at far lower cost and risk.

For quantum computing to succeed commercially, the industry must demonstrate clear, repeatable, and application-relevant technical and economic advantages over conventional HPC techniques. Until such advantages are proven in real-world workloads, quantum computing is likely to remain a complementary, experimental technology rather than a disruptive replacement for classical high-performance systems.

Quantum Computing in Support of AI

Quantum computing has the potential to enhance machine learning (ML) and artificial intelligence (AI) primarily by accelerating the underlying mathematical operations that dominate training and inference workloads.

How does quantum computing help? Quantum algorithms, in principle, can perform certain linear algebra operations exponentially faster than classical methods by exploiting quantum superposition and interference. While practical speedups depend heavily on data encoding and error correction, even small improvements could significantly reduce the time and energy required to train large models, particularly in HPC environments where AI workloads already consume vast computational resources.

A second area of promise lies in quantum-enhanced optimization, which is central to both traditional ML and modern deep learning. Training a model is fundamentally an optimization problem: finding parameter values that minimize a loss function across a complex, high-dimensional landscape.

Classical gradient-based methods can struggle with local minima, flat regions, or slow convergence. Quantum approaches could explore these landscapes more efficiently, potentially identifying better solutions with fewer iterations.

For large-scale AI models, this could translate into faster convergence during training, reduced reliance on brute-force hyperparameter tuning, and lower overall compute requirements.

Near-term Path: Hybrid Workflows

The most realistic impact comes from hybrid quantum–classical workflows, not fully quantum AI systems. Think GPU-style acceleration, not replacement.

Quantum computing may also enable new forms of machine learning that are difficult or impractical on classical systems. Quantum machine learning (QML) models might operate in exponentially large feature spaces using relatively few qubits, allowing complex correlations to be represented more compactly. Such a capability could be particularly valuable for pattern recognition, anomaly detection, and classification tasks involving highly complex or noisy data, such as molecular data, sensor streams, or financial time series.

Finally, the most realistic near-term impact is likely to come from hybrid quantum–classical workflows, rather than fully quantum AI systems. Similar to the use of GPUs today, quantum processors could act as accelerators, handling specific subroutines such as optimization steps, sampling, or feature mapping. At the same time, classical HPC systems manage data ingestion, control logic, and large-scale model orchestration.

Such a hybrid approach aligns well with existing AI infrastructure and could incrementally reduce training times or improve model quality without requiring wholesale changes to AI software stacks.
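The hybrid pattern can be illustrated with a minimal variational loop: a classical optimizer proposes parameters, a quantum subroutine returns a measured expectation value, and the classical side updates the parameters. This sketch assumes no quantum hardware or SDK. The hypothetical qpu_expectation function stands in for a QPU call by returning the exact value ⟨Z⟩ = cos(θ) for a single qubit rotated by RY(θ), and the gradient uses the parameter-shift rule, which reruns the same circuit at θ + π/2 and θ - π/2. The function names, learning rate, and step count are illustrative choices.

```python
import math

# Hypothetical stand-in for a QPU call. On real hardware this would submit
# a parameterized circuit and estimate <Z> from repeated measurement shots.
# Here we return the exact value for RY(theta) applied to |0>: cos(theta).
def qpu_expectation(theta: float) -> float:
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient of <Z> via the parameter-shift rule (two extra QPU calls)."""
    shift = math.pi / 2
    return 0.5 * (qpu_expectation(theta + shift) - qpu_expectation(theta - shift))


def hybrid_minimize(theta0: float, learning_rate: float = 0.4, steps: int = 100):
    """Classical gradient-descent loop that offloads evaluations to the 'QPU'."""
    theta = theta0
    for _ in range(steps):
        grad = parameter_shift_gradient(theta)  # quantum subroutine
        theta -= learning_rate * grad           # classical update
    return theta, qpu_expectation(theta)


if __name__ == "__main__":
    theta, energy = hybrid_minimize(theta0=0.1)
    # theta should approach pi, where <Z> reaches its minimum of -1.
    print(f"theta={theta:.4f}, <Z>={energy:.4f}")
```

In a real deployment, only qpu_expectation would move to quantum hardware; the loop, data handling, and orchestration would stay on classical HPC resources, which is the GPU-style division of labor described above.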

Quantum Computing HPC Developments

Recent quantum computing HPC developments and innovations that will drive the field in the coming years include:

Dozens of U.S. and international supercomputing labs announced they have integrated NVIDIA NVQLink technology for cutting-edge research. The U.S. labs include:

- Brookhaven National Laboratory

- Fermi National Accelerator Laboratory

- Lawrence Berkeley National Laboratory

- Los Alamos National Laboratory

- MIT Lincoln Laboratory

- National Energy Research Scientific Computing Center

- Oak Ridge National Laboratory

- Pacific Northwest National Laboratory

- Sandia National Laboratories

An additional 15 international supercomputing centers have joined these labs.

Other Quantum Computing / HPC News

DARPA anticipates announcing multiple awards for its Heterogeneous Architectures for Quantum (HARQ) program by February 1, 2026. HARQ seeks to transform how quantum computing systems are designed and scaled by moving beyond today’s one-qubit-to-rule-them-all approach. The program seeks to do this by leveraging advances in photonic integration, quantum interconnects, and quantum circuit design to overcome current scaling and performance bottlenecks in quantum systems.

IQM Quantum Computers and Telefónica announced that they have joined forces to sign a purchase agreement with the Galician Supercomputing Center (CESGA) to install two full-stack quantum computers in Spain. Under the agreement, IQM will deliver and install a 54-qubit IQM Radiance, designed for integration into high-performance computing centers, together with a 5-qubit IQM Spark system dedicated to education. The systems are scheduled for delivery by June 2026.

IonQ announced the continuation of its strategic partnership with the Korea Institute of Science and Technology Information (KISTI) and forthcoming delivery of a 100-qubit IonQ Tempo quantum system. Under the agreement, IonQ will deliver its next-generation Tempo 100 quantum system to support KISTI’s hybrid quantum-classical research initiatives. The system will be integrated into KISTI-6 (“HANKANG”), the largest high-performance computing (HPC) cluster in Korea, creating the first instance of hybrid quantum-classical onsite integration in the country.

The Connecticut State Colleges & Universities (CSCU) Center for Nanotechnology (CNT) announced a name change and expanded mission. The center will now be known as the CSCU Center for Quantum and Nanotechnology (QNT). This change reflects the state’s increasing investment in quantum technologies and highlights the center as a leader in advancing research and education in quantum technologies and nanoscale science across Connecticut.

Qilimanjaro Quantum Tech and Oxigen Data Center announced a new strategic collaboration to jointly explore how multimodal quantum computers can be integrated into commercial data centers, laying the foundations for the next generation of hybrid quantum infrastructure. The two organizations will work together to understand and define the requirements for integrating two highly advanced, interdependent systems.

Leaders from Florida’s technology and venture sectors announced the formation of Florida Quantum. This new statewide initiative aims to organize, attract, and accelerate quantum innovation across Florida. It is comparable to state-level quantum alliance initiatives launched recently in Maryland, Illinois, New Mexico, and Connecticut.

The Fraunhofer Industrial Quantum Computing Consulting and Testing Center (INQUBATOR) announced it will implement innovative, easy-access offerings to help industrial users get started with quantum computing. The program aims to enable companies to overcome the hurdles of using quantum computing. To that end, a central element of the INQUBATOR project is easy and cost-effective access to quantum computers from various manufacturers.

Additionally, the effort intends to identify and evaluate new application-related use cases where the use of quantum computers promises a foreseeable advantage.

CERN hosted the Quantum Business Community (QBC) Summit, the annual gathering of the European Quantum Industry Consortium (QuIC), of which CERN is an associate member. The event brought together more than 100 participants. It featured nine panels and keynote speeches from industry leaders, providing a space to guide Europe’s next strategic steps in the emerging field of quantum technology.

March 2026 Update

A Reference Architecture for Quantum-Centric Computing

AI’s compute requirements keep growing. As noted in a previous post and earlier in this report, quantum computing will play a role in the future. Specifically, the most realistic near-term impact is likely to come from hybrid quantum–classical workflows, rather than fully quantum AI systems.

Similar to the use of GPUs today, quantum processing units (QPUs) could act as accelerators, handling specific tasks. At the same time, classical HPC systems manage data ingestion, control logic, and large-scale model orchestration.

With such a model in mind, IBM launched a reference architecture for implementing what it calls quantum-centric supercomputing (QCSC). The new framework integrates the two types of computing (traditional HPC and quantum computing) and provides for shared resources.

IBM’s new QCSC architecture comprises four logical layers: hardware infrastructure, system orchestration, application middleware, and applications. It defines how QPUs can work alongside GPUs, CPUs, ASICs, and FPGAs in both tight and loose coupling scenarios. IBM proposes a quantum systems API (QSA) that acts as the programmatic boundary between the classical and QPU environments.

The architecture depicts scale-up coupling of a QPU and a classical environment for real-time access via a low-latency interconnect, such as RDMA over Converged Ethernet (RoCE), Ultra Ethernet, or NVQLink, according to a preprint of IBM’s new paper, “Reference Architecture of a Quantum-Centric Supercomputer.” The scale-up pattern is ideal for classes of problems that require the lowest latency, and therefore the tightest coupling between QPUs and classical HPC resources, such as the error correction needed for fault-tolerant operation.

DARPA Expands Quantum Benchmarking Initiative

The Defense Advanced Research Projects Agency (DARPA) announced it is expanding its Quantum Benchmarking Initiative (QBI). The move is driven by two factors: the increased interest in quantum computing and the rapidly growing number of organizations offering solutions. Of particular interest are entrants with distinct approaches that have not yet been evaluated under QBI.

Organizations that QBI has not yet funded are invited to join under a new Stage A Quantum Benchmarking Initiative Topic (QBIT). The work builds on QBI’s ongoing effort to determine whether any quantum computing architecture can achieve utility-scale operation by 2033, meaning its computational value exceeds its cost.

Since its launch in mid-2024, QBI has evaluated approaches from 20 commercial companies spanning a variety of qubit architectures. Eleven organizations have advanced to Stage B for deeper technical risk-reduction and development planning. Additionally, two performers from the Underexplored Systems for Utility-Scale Quantum Computing (US2QC) pilot program have advanced to Stage C, working with the government to verify and validate system-level operation.

Key Questions for Enterprise CTOs

Quantum computing is transitioning from experimental cryptography applications to high-performance computing integration. Major infrastructure providers (NVIDIA, IBM, Cisco) have announced quantum-HPC technologies, signaling long-term industry commitment. However, enterprises should view this as a 3-5 year horizon, not immediate deployment. Current systems lack the scalability, commercial stability, and proven ROI for production enterprise workloads.

Not yet for general production use. Error correction can require hundreds or thousands of physical qubits to create a single reliable logical qubit, and current systems cannot yet supply them at the necessary scale. Academic and national laboratory supercomputing centers will be the first adopters, validating the technology before enterprise deployment becomes viable. The most realistic near-term application is hybrid quantum-classical workflows: using quantum processors as accelerators for specific subroutines, similar to how GPUs function today.

Three primary challenges exist:

- Most quantum vendors remain heavily dependent on venture capital and government grants with limited revenue streams, creating financial sustainability risk;

- The timeline to commercially viable products remains uncertain, with companies potentially failing financially before technology matures;

- Rapid advances in classical HPC and AI systems may close performance gaps before quantum achieves commercial advantage, limiting market opportunity.

Integration is occurring through hybrid quantum-classical architectures. NVIDIA’s NVQLink technology connects quantum processors with GPU computing for quantum-accelerated supercomputers. The most promising near-term applications involve quantum processors handling specific optimization steps, sampling, or feature mapping while classical HPC systems manage data orchestration and model training. This approach allows incremental performance improvements without requiring wholesale changes to existing AI infrastructure.

CTOs should monitor quantum developments but avoid premature investment. Key tracking points include:

- Vendor financial stability and funding rounds;

- Demonstrations of quantum advantage in specific, relevant use cases;

- Progress by academic/national lab implementations;

- Development of standardized quantum-classical integration tools.

The appropriate enterprise strategy is informed observation with small-scale experimentation partnerships, not major infrastructure commitments.

What Experts Are Saying

From our Q1 2026 Quantum Computing Live Discussion.

“It’s not just speed and scale – it’s complexity, and then a whole new way of thinking about data.”

Philip Russom

Data and Analytics Analyst, IronSpark Analysis

“A quantum computer is not likely to appear in an enterprise anytime soon – it’s gonna be delivered as a service.”

Joe McKendrick

People and Business Empowerment Analyst, IronSpark Analysis

“The Open Quantum Safe project has 1,694 contributors from 371 organizations.”

Elisabeth Strenger

Open Source and Responsible AI Analyst, IronSpark Analysis

About the Analyst

Salvatore Salamone

Chief Analyst, IronSpark Analysis

Salvatore Salamone brings 30+ years of experience analyzing technology and scientific developments across data infrastructure, high-performance computing, and emerging technologies. He has authored three business technology books and served as editor at leading industry publications including Network Computing, Bio-IT World, and RTInsights.

Want Analysis Like This Delivered Regularly?

Subscribe to Salvatore’s Substack newsletter, ABR Intelligence Report, for weekly insights on artificial intelligence, business intelligence, and real-time data trends shaping enterprise technology.

Need Custom Research for Your Market?

IronSpark creates analyst-backed research reports, market analysis, and thought leadership content for data and analytics vendors.
